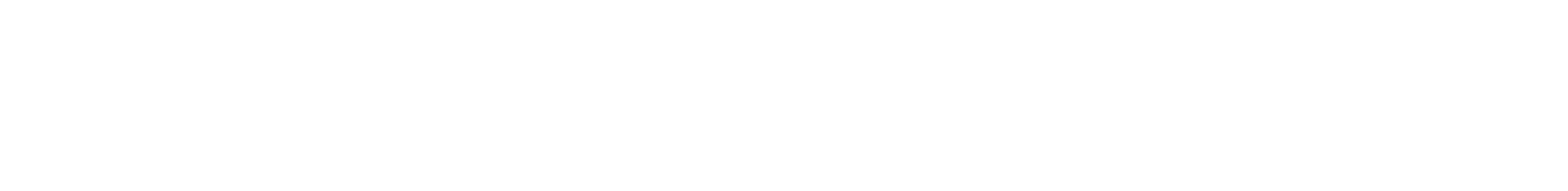

Tour Your Future

Explore future STEM careers with Tour Your Future! This program for girls and non-binary youth ages 11–17 provides the opportunity to meet female+ professionals who work in STEM fields. Participants can experience what it is like to work in a variety of industries ranging from wildlife rehabilitation to robotics and more!

Tour Your Future welcomes cisgender girls, transgender girls, non-binary youth, gender nonconforming youth, genderqueer youth, and anyone who identifies as female.

Pre-registration is required.

Cost: $13 per student

Scholarships available. Call 412.237.3400 to learn more!

Tues., June 11

10 am–Noon

CGI Pittsburgh Office

611 William Penn Pl., Suite 1200

Pittsburgh, PA 15219

CGI is a global IT and business consulting firm with over 90,000 members. Our members provide creative technological solutions to our clients’ biggest problems.

Join us as we embark on an immersive technological journey, where innovation meets education. Our tour is designed to ignite curiosity about the world of STEM and inspire the next generation of technology leaders. Explore a diverse range of STEM careers through an interactive career panel led by our team of passionate STEM experts, and engage in hands-on STEM activities throughout the guided office tour.

Don’t miss this opportunity to visit CGI and get answers to your most pressing career questions!

Max: 15 Min: 3

Website: CGI.com

Wed., June 12

1–3 pm

University of Pittsburgh

Swanson School of Engineering

3700 O’Hara Street, Pittsburgh, PA 15261

Come visit the Swanson School of Engineering, where you will get a glimpse into its First-Year Engineering Program! Learn about the different engineering courses offered and ask questions about the specific types of engineering that interest you. The school, part of the University of Pittsburgh, has newly renovated, state-of-the-art buildings and labs. Meet current engineering Ambassadors as they give you a tour of Benedum Hall. In the Introductory Makerspace, where all Pitt engineering students receive training during their first year, take part in an activity investigating conductivity and coding using Makey Makey kits.

Capacity: 20

Website: http://www.engineering.pitt.edu/

Thurs., June 13

1–3 pm

Highmark Health

Corporate Headquarters

Fifth Avenue Place, 120 Fifth Ave.

Pittsburgh, PA

Headquartered in Pittsburgh, PA, Highmark Health is a national blended health organization whose mission is to create a remarkable health experience, freeing people to be their best. To support Highmark’s mission, the Enterprise Data & Analytics (ED&A) department creates actionable insights that enhance business decisions. To create these insights, ED&A employs data scientists, data engineers, research statisticians, actuaries, and more to analyze and showcase vast amounts of medical and insurance data. During this tour, students will hear from people in several different roles within ED&A, with a focus on their day-to-day work as well as each speaker’s individual career pathway. Interactive activities throughout the tour will demonstrate the innovative and exciting work our team performs. The tour will take place at Highmark’s Corporate Headquarters to showcase the working environment.

Max: 15 Min: 3

Website: https://www.highmarkhealth.org/hmk/index.shtml

Thurs., June 26

10 am–Noon

Hatch

375 N. Shore Dr.

Pittsburgh, PA 15212

Hatch is passionately committed to the pursuit of a better world through positive change. We are a global network of more than 10,000 employees, with more than 20 offices in the United States. We combine engineering and business knowledge and apply them to the various business units we work with. Our main engineering focuses are metals, energy, infrastructure, and sustainability. We’re employee-owned and have a flat organizational structure. As a company, we’re committed to working closely with the communities we serve to ensure that our solutions optimize environmental protection, economic prosperity, social justice, and cultural vibrancy. We want the businesses, ecosystems, and communities we work with to thrive, both now and into the future.

Our Pittsburgh office serves as Hatch’s U.S. corporate headquarters. Groups in the Pittsburgh office work in nuclear energy, mechanical, structural, electrical, process, steel, and metals engineering.

You will visit with female STEM engineers in the structural, mechanical, infrastructure, and process fields. You’ll tour the office, meet some recent graduates, learn about the culture of Hatch, perform an experiment, and enjoy lunch, then finish up with a Q&A session.

Max: 15 Min: 5

Website: https://www.hatch.com/About-Us/About-the-Company

Wed., July 10

10 am–Noon

Astrobotic

1016 N. Lincoln Ave.

Pittsburgh, PA 15233

Astrobotic is at the forefront of advancing space exploration and technology development. Our expertise spans from lunar rovers, landers, and infrastructure to spacecraft navigation, machine vision, and computing systems for in-space robotic applications. To date, the company has been contracted for two lunar missions and has won more than 60 NASA, DoD, and commercial technology contracts worth more than $600 million.

We recently launched and operated the first American lunar lander mission since the Apollo Program. Beyond helping lead America back to the Moon, Astrobotic developed and operates highly reusable vertical takeoff, vertical landing (VTVL) rockets and continues to advance next-generation VTVL capabilities and advanced rocket engines. Established in 2007, Astrobotic is headquartered in Pittsburgh, PA, with a propulsion and test campus in Mojave, CA.

Students will get the opportunity to meet real spacecraft engineers, tour Astrobotic’s Pittsburgh headquarters, view the real-time development of our next lunar lander, Griffin, through windows into our large clean room, and participate in a hands-on activity with staff from Moonshot Museum.

Max: 15 Min: 3

Website: Astrobotic.com

Tues., Aug. 6

10 am–Noon

Beaver Valley Nuclear Power Station

200 State Route 3016

Shippingport, PA 15077

The Beaver Valley Nuclear Power Station is a two-unit site powered by two Westinghouse pressurized water reactors. When you arrive, we will gather in the SSB (Site Support Building) for conversation. We will provide a general overview of the Beaver Valley Nuclear Power Station, give a brief explanation of how nuclear power is generated, and open up the conversation for questions. We will then take a walking tour to visit the simulator (a replica of our control room) and see the steam generator tent, before returning to the SSB for additional questions and discussion.

Website: Vistra Zero – Vistra Corp.

Thurs., Aug. 8

10 am–1 pm

University of Pittsburgh Center for Vaccine Research

3501 Fifth Avenue, Biomedical Sciences Tower 3

Pittsburgh, PA

Come visit us at the Center for Vaccine Research! This is a unique opportunity to talk to the experts about VIRUSES, VACCINES, and the IMMUNE RESPONSE. During the tour, you will explore the laboratory space, participate in scientific experiments, learn about all the training that goes into working with pathogenic viruses, and leave with a gift bag to extend your learning at home. You will meet women immunologists and virologists at all career levels and learn what it’s like to be a scientist. Most importantly, you will have a chance to talk to some of the most influential women at the center and get their take on what it’s like to be a woman in science!

Max: 10 Min: 3

Website: https://www.cvr.pitt.edu/

FAQs

Can I go on a tour? Tours are open to individuals and groups ages 11–17. Cisgender girls, transgender girls, non-binary youth, gender nonconforming youth, genderqueer youth, and anyone who identifies as female are welcome.

What will I do on a tour? Typical tours include a guided tour of the host site’s facility, a Q&A session with women+ professionals, and a hands-on activity related to the work done at the host site.

How much does a tour cost? All tours are $13 per student. A limited number of need-based scholarships for individuals and groups are available. To request a scholarship for an individual, please complete this application. To request a scholarship for a school group, please fill out this application.

How do I sign up? Register online or call 412.237.3400 to book your spot.

What if the tour is filled? If the tour you’re interested in is filled, please call 412.237.3400 to be added to the waitlist. You will be notified if spaces become available.

Where do I go for the tour? All tours are on site at the specified locations, or on a virtual meeting platform. These are NOT at Carnegie Science Center. After registration is complete, you will receive an informational email approximately one week prior to the tour date with specific arrival and tour details.

For Professional Women+ in STEM Fields

If you are a cisgender woman, transgender woman, non-binary individual, gender nonconforming individual, genderqueer individual, or anyone else who identifies as female and works in STEM, we would love to meet you! You’re just one click away from inspiring some of our next great STEM professionals! Please take the time to fill out this form to host a Tour Your Future event at your place of work.

Tour Your Future No-Call No-Show Policy

Participants must cancel their spot at least one week in advance if they are unable to attend. If any participant is a no-call no-show two times during a semester, that participant will be unable to register for future tours.

Questions? Please contact Maggie Fonner at FonnerM@carnegiesciencecenter.org for more info.